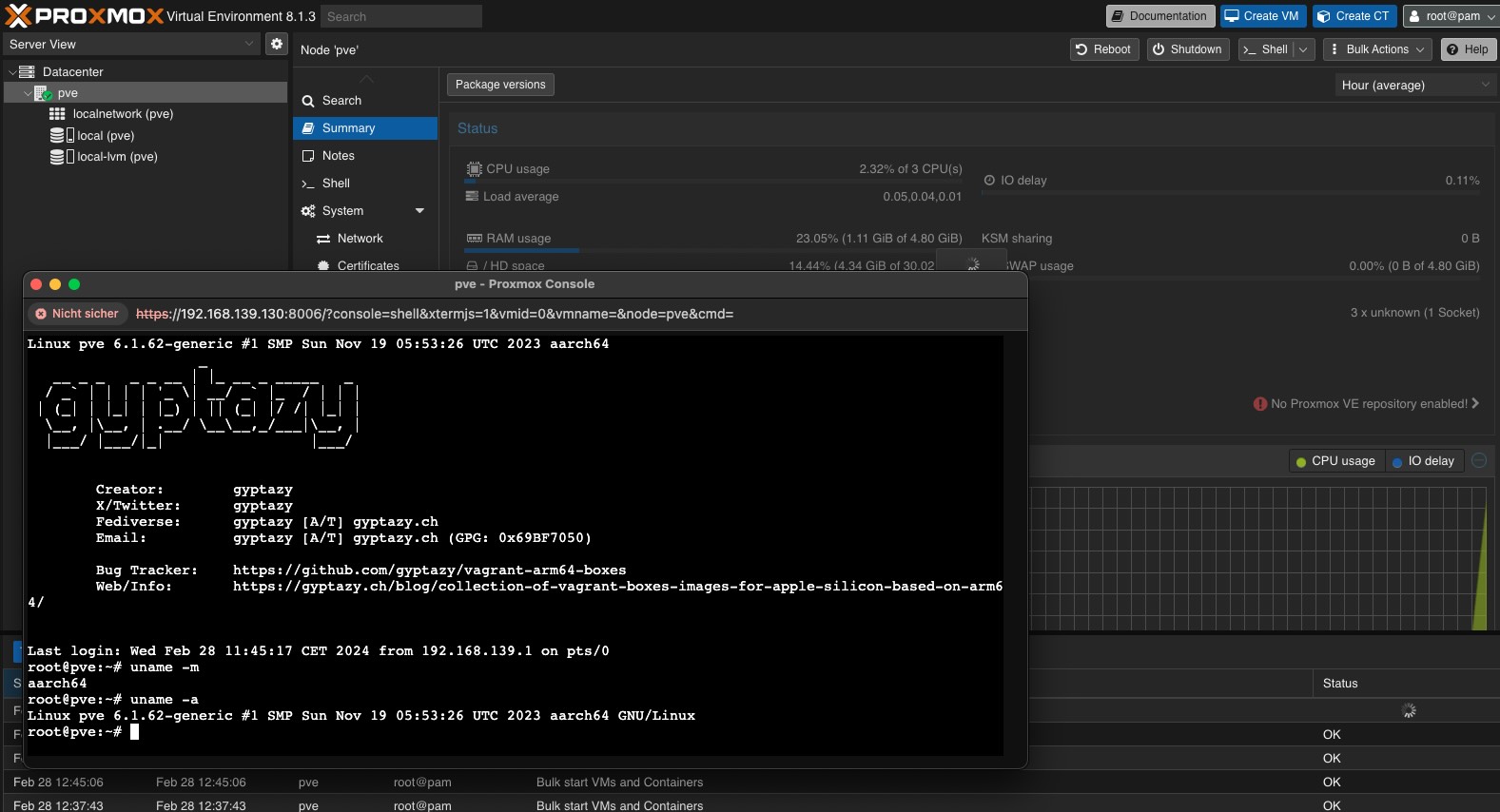

You can find more talks here: https://cdn.gyptazy.ch/tech-talks/

#secinfo #security #secops #securitypatchmanagement #patchmanagement #debian #proxmox #freebsd #bsd #rockylinux

Do I know anyone who's successfully gotten cephfs exported from a proxmox cephfs cluster into a VM?

I can get it mounted, all good. It can see the contents there, yay! When it goes to write, it gets an "Operation not permitted" error but still creates an empty file with the right name.

What weird permissions bit have I not set right?


OK, before I dig out my old server in the basement, order RAM and SSDs and start pulling out my hair while testing, I'll #askfedi first:

How well does #openbsd run as a VM in #proxmox?

I'm thinking of migrating my #nextcloud server to a VM, as I think the current hardware is dying.

I did some reading online but maybe someone here has first hand experience?

Today I pondered something: Proxmox and others boast native ZFS integration as one of their strengths. Many Proxmox features rely on ZFS's unique capabilities, and many setups are built around them. If Oracle were to send a cease and desist tomorrow, how would the situation unfold?

- Proxmox Backup 3.1.5

#arm64 #aarch64 #vagrant #vagrantcloud #applesilicon #vm #vmware #fusion #bsdcafe #netbsd10 #Proxmox #ProxmoxBackup #VagrantCollection #gyptazy

#homelab Hello there. I have bought a Beelink S12 Pro. It has 16 GB of memory on board. However, I have heard that an #intel N100 can run with 32 GB.

Who has an Intel N100 or Beelink S12 Pro and can tell me which 32 GB memory works well with it?

Also, I heard rumours that it will run with 32 GB but can never use more than 16 GB.

Any tips appreciated and please boost!

#proxmox #linux #n100

Here you can find more information about it and how to install and use it.

https://gyptazy.ch/blog/proxmox-new-import-wizard-for-migrating-vmware-esxi-virtual-machines/

Being away from this topic for too long feels like starting from scratch again…

Ok, this is maybe a dumb me thing, but ever since I migrated #HomeAssistant to an LXC on #Proxmox it has been losing HACS-downloaded components on restart. I used the same docker-compose file I used when I had it on metal, so I'm very confused.

Good morning, world!

It's 8:52 and it's time for my third coffee. I'd better stop here.

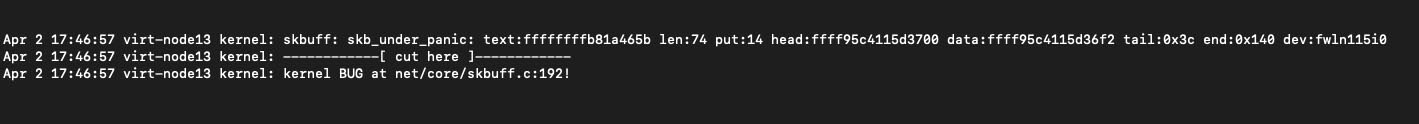

At the moment, I'm testing a Proxmox server that suddenly started crashing every 2-3 days. I suspect something with the RAM or the disk controller, but I'm still investigating.

#VMware is canceling the free ESXi, which means the next generation will not be able to use it in their homelabs. Other solutions like #Proxmox, #KVM or #Bhyve will educate the admins of the future instead. Citrix did the same thing with XenServer and killed a complete ecosystem; even though it was later released for free again, people did not come back.

A client's server (a Dell r630) began experiencing issues (originally due to a faulty switch, but it led to new hardware purchases). It was running Proxmox, which I installed about three years ago, featuring SSD drives and 128 GB of RAM. A new server was set up around four months ago, but a water leak from the floor above flooded the rack, rendering the new hardware unusable. In a rush yesterday morning, I quickly reconfigured the "old" r630, installing FreeBSD 14.0-RELEASE and transferring VMs from the latest backup using zfs-send/receive. After launching the VMs on bhyve with some minor adjustments, everything was back in production. Just got a call saying the performance has "drastically improved." Previously, a specific end-of-month process on the r630 with Proxmox took about 30 minutes, while the new (flooded) server cut that down to 15 minutes, much to their delight. This morning, the same process only took 8 minutes, to their great surprise. I'm curious to investigate, but can't quite pinpoint how this is possible: my suspicion is that the process is mainly I/O bound, and the nvme driver in bhyve is exceptionally efficient in this aspect. For once, I'm taking credit for something I never expected. :-)

#TechLife #DataRecovery #ServerManagement #ITChallenges #bhyve #Proxmox #FreeBSD

Client call in panic mode. CRM software techs logged in and filled up the disk.

With 50GB free out of 100GB, I wondered how that's possible. They wanted to copy the entire directory to another for "safety".

I explained that I could back up and restore in seconds if needed. But no, they had done as they said. Result? Disk filled, everything halted.

I'm out and about.

Proxmox doesn't let me modify virtual hardware through its Android app or limited mobile web page. Desktop site request doesn't help, layout's all messed up. In emergency mode, I set up an xrdp FreeBSD jail with Mate and Firefox, connected via VPN on my smartphone, accessed the client's Proxmox.

Added a virtual disk, extended the LVM volume, and grew the XFS filesystem on top of it.

Total time? A blind 10 minutes.

Triumphantly, I call the client (who knew I was away from a computer) to report success.

The naive response: "Okay, but all this time just to expand a virtual disk?"

I decide it's better not to comment 😊
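For readers wondering what "add a disk, extend the LVM, grow XFS" usually involves inside the guest, here is a minimal sketch that only builds the command list and runs nothing. The device, volume group, logical volume and mount point names are hypothetical, not taken from the post, and the sketch assumes the whole new disk is handed to LVM as a physical volume.

```python
import shlex


def plan_xfs_on_lvm_growth(new_disk, vg, lv, mount_point):
    """Return the commands (as argument lists) to grow an XFS filesystem on LVM.

    All names are placeholders supplied by the caller; nothing is executed.
    """
    return [
        # Initialise the new virtual disk as an LVM physical volume.
        ["pvcreate", new_disk],
        # Add that physical volume to the existing volume group.
        ["vgextend", vg, new_disk],
        # Grow the logical volume into all newly available free space.
        ["lvextend", "-l", "+100%FREE", f"/dev/{vg}/{lv}"],
        # XFS is grown online, addressed by its mount point.
        ["xfs_growfs", mount_point],
    ]


if __name__ == "__main__":
    # Hypothetical names, purely for illustration.
    for cmd in plan_xfs_on_lvm_growth("/dev/sdb", "vg_data", "lv_data", "/srv"):
        print(shlex.join(cmd))
```

Printing the plan first and reviewing it before running anything is a deliberate choice here: on a production guest, a typo in the device name is the expensive mistake.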

Ceph Cluster Hits 1 TiB/s Using AMD EPYC Genoa + NVMe Drives

Tomorrow morning, I'll need to migrate an Alpine Linux-based VPS from a physical host (Proxmox) to another one running FreeBSD.

My first instinct was to go with bhyve, but I'm considering a less conventional and perhaps more efficient approach. 😉

I appreciate bhyve for many reasons, but this afternoon, I have to give a shout-out to Proxmox.

I had to urgently recover some VPS from a host that had a breakdown, ensuring minimal disruption, and I had very little time.

Thanks to live restore, I managed to get both critical servers (each several hundred GB) up and running in just a few minutes, while copying the missing data in the background and restoring the rest at my own pace.

I can achieve similar results (if the original server is still operational) using snapshots, zfs-send, and zfs-receive, stopping, taking another snapshot, another send and incremental receive, then starting – or by sending and receiving from the backup server. However, you still need to wait for the copy to complete before starting the VM, which could take hours.

#Proxmox #Virtualization #ServerRecovery #LiveRestore #DataRecovery
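The snapshot-based alternative mentioned in the post above (full send while the VM runs, then stop, second snapshot, incremental send, start) can be sketched as an ordered command plan. This is only an illustration under assumptions: the dataset names, the target host and the snapshot labels are hypothetical, transport over `ssh` is an assumption, and the stop/start steps are left as comments because they depend on the hypervisor in use.

```python
def plan_two_pass_zfs_migration(dataset, target_host, target_dataset):
    """Return shell command strings for a two-pass zfs send/receive migration.

    Nothing is executed; the caller reviews and runs the steps manually.
    """
    base = f"{dataset}@migrate-base"    # hypothetical snapshot label
    final = f"{dataset}@migrate-final"  # hypothetical snapshot label
    # Assumes SSH access to the target; -F lets the target roll back to
    # its latest snapshot before applying the stream.
    receive = f"ssh {target_host} zfs receive -F {target_dataset}"
    return [
        # Pass 1: bulk copy while the VM keeps running.
        f"zfs snapshot {base}",
        f"zfs send {base} | {receive}",
        "# stop the VM on the source host here",
        # Pass 2: only the blocks changed since the base snapshot.
        f"zfs snapshot {final}",
        f"zfs send -i {base} {final} | {receive}",
        "# start the VM on the target host here",
    ]


if __name__ == "__main__":
    # Hypothetical names, purely for illustration.
    for step in plan_two_pass_zfs_migration("zroot/vm/crm", "newhost", "tank/vm/crm"):
        print(step)
```

The downtime is limited to the second, incremental pass, but as the post notes, the VM cannot start on the target until the data has fully arrived, which is the gap live restore closes.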